So, what did you get up to on the holiday break? I was intending to spend a bit of time catching up on my horribly late slate of projects, but I ended up going down yet another rabbit hole – and this one’s quite intriguing. Cyberpunk 2077 on PC ships with a range of internal benchmarks and streaming tests, only one of which users actually get to see. However, several others are available and thanks to a quirk of CD Projekt RED’s cross-platform save system, it’s possible to port those benchmark sequences across to consoles. I wasn’t exactly optimistic that they would work – but they do. The question is, are they in any way useful?

CDPR’s ‘official’ benchmark and a range of others are accessible in the PC version of the game and by my count, four of them auto-save during their duration, or else allow you to make a manual save as they start. This makes them exceptionally easy to access on the PC game (you load them like any other save game) but it also means that they transfer across once you log into CDPR’s online network.

Under this system, the last manual save, quick save and auto save move from system to system, and that’s how I managed to ‘port’ PC’s benchmark sequences across to consoles. Unfortunately, saving progress isn’t possible in the official benchmark available from the main menu, but it’s arguably less interesting than the others anyway.

So how good are they as actual benchmarks – and to what extent do they reveal actual differences between the consoles? It should go without saying that with the consoles running their RT modes, or quality mode in the case of Xbox Series S, all of these benchmarks basically lock to their target 30 frames per second, which highlights a limitation of porting a PC benchmark across to a console. Typically, a benchmark is used with an unlocked frame-rate, so components can be pushed to their maximums. Console experiences are more dedicated to consistency and that’s exactly what the RT mode delivers in these benches: a new frame for every second refresh on a typical living room display. In the benchmark content at least, they run nigh-on flawlessly.

In Cyberpunk 2077’s 60fps performance mode though, there are differences. In three of the four benchmarks, PS5, Xbox Series X and Series S are quite closely matched – with just the sense that the Series X isn’t quite as solid with its lock to 60fps. In the fourth benchmark though, the load increases and PlayStation 5 can run up to 12fps faster than Series X.

Previously, there’s been some level of assumption that the performance mode may be CPU limited (which makes the Xbox result odd to explain – it has more CPU resources available than PS5). However, Series S running with a reduced resolution is able to out-perform Series X, so the GPU is the more likely explanation. With a 60fps cap in place, standard benchmarking metrics aren’t especially valid but consistency can be measured – our sample of this benchmark is 15,500 frames long. And where there are duplicate frames, we’re deviating from consistency.
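To make the consistency idea concrete, here is a minimal sketch of how duplicate frames in a 60Hz capture could be tallied. It assumes the capture has already been reduced to a list of flags, one per display refresh, marking whether that refresh showed a new frame; the function name, the input format and the sample data are all hypothetical and are not taken from the actual analysis tooling.

```python
def consistency_report(is_new_frame, refresh_hz=60):
    """Summarise a capture where each entry marks one display refresh
    as a new frame (True) or a duplicate of the previous one (False)."""
    total = len(is_new_frame)
    if total == 0:
        raise ValueError("capture is empty")
    new_frames = sum(1 for flag in is_new_frame if flag)
    duplicates = total - new_frames
    return {
        "captured": total,
        "duplicates": duplicates,
        # Share of refreshes that repeated the previous frame.
        "duplicate_pct": 100 * duplicates / total,
        # Average frame-rate implied by the new frames across the capture.
        "avg_fps": refresh_hz * new_frames / total,
    }


# Made-up capture for illustration only: 600 refreshes, 30 of them duplicates.
sample = [True] * 570 + [False] * 30
report = consistency_report(sample)
print(report)
```

With a 60fps cap in place, a perfect run would show zero duplicates, so the duplicate percentage is arguably a more telling figure than the average frame-rate.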

Quite why there are differences between Series X and PS5 is curious – you’d expect Xbox to be faster, or broadly on par based on everything we’ve learned from the consoles this generation. The quality presets chosen by CD Projekt RED for PS5 and Xbox Series X are basically identical. However, while both use FSR 2 upscaling to reconstruct to 1800p, the dynamic resolution ranges do differ: Xbox Series X’s DRS range is 1152p to 1440p, while PS5’s equivalent is 1008p to 1440p. We think Xbox may be using variable rate shading (VRS) but if it is, the boost to performance seems minimal. The more aggressive DRS window on PlayStation 5 seems to translate to better performance – and we’d argue that matching that range on Xbox Series X might be a good move for the developer.
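For a rough sense of how much extra headroom the lower DRS floor offers, the pixel counts can be worked out directly. This sketch assumes a 16:9 frame at each quoted vertical resolution, which the figures above don't state explicitly, and the helper name is purely illustrative.

```python
def pixels_16x9(height: int) -> int:
    """Pixel count of a 16:9 frame with the given vertical resolution (assumed aspect ratio)."""
    width = height * 16 // 9
    return width * height


# DRS windows quoted above: (minimum, maximum) vertical resolution.
drs_ranges = {
    "Xbox Series X": (1152, 1440),
    "PS5": (1008, 1440),
}

for console, (low, high) in drs_ranges.items():
    print(f"{console}: {pixels_16x9(low):,} to {pixels_16x9(high):,} pixels")

# How much smaller PS5's lowest internal resolution is than Series X's.
floor_ratio = pixels_16x9(1008) / pixels_16x9(1152)
print(f"PS5 floor is {floor_ratio:.1%} of the Series X floor")
```

On those assumptions, PS5’s minimum resolution pushes a little under a quarter fewer pixels than Series X’s minimum, which is the sort of gap that could plausibly show up in the heaviest scenes.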

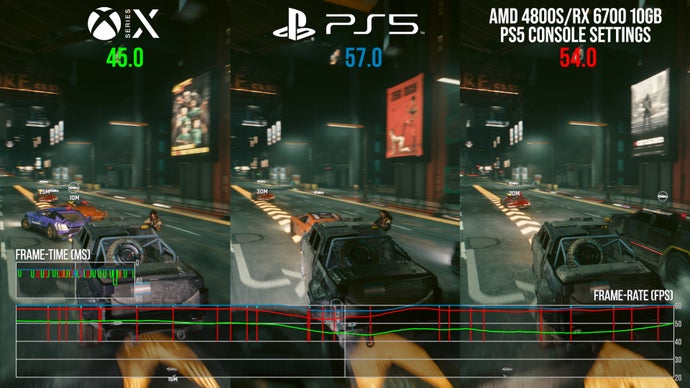

Completing my journey down the rabbit hole, I transferred across equivalent settings from the console version’s performance mode to PC (a hat tip to Mohammed Rayan for the assist here) and tested out the same benchmarks on a computer built from console parts, or close equivalents: the AMD 4800S desktop kit, built on the Xbox CPU, along with the RX 6700 10GB. This slightly obscure AMD GPU has very similar specs to the PS5 GPU – memory interface and infinity cache aside. Matching frequencies to the PS5’s 2.23GHz, our console-like PC is almost as fast as PS5 and again, faster than Series X.

Swapping in a mammoth RX 7900 XTX eliminated all performance issues. I also tweaked the dynamic resolution ranges on the RX 6700 runs to match both PS5 and Series X, finding that in both scenarios, the PC was faster than Series X for the most part (but not by much on matching Xbox settings) while PS5 was a little more consistent still. Perhaps the different APIs or GPU compilers made a difference, or perhaps the dynamic resolution formula is different between consoles and PC (it often is in games that support DRS on both systems). Even so, the results do suggest that Xbox could stand to benefit from the option of a wider DRS window.

So, forgive me for indulging myself on this one. While the exercise is mostly academic, the results are still interesting – we’ve known about the internal tests for some time, but there was never any guarantee that they’d operate on the consoles at all. There is still a divide between PC and individual console builds, after all. However, they do work – albeit within the settings confines and frame-rate limits set by the developer. And now I’m wondering if there’s anything similar in the Phantom Liberty expansion and perhaps even The Witcher 3. Perhaps I’ll find time to look into it during my next Christmas break.